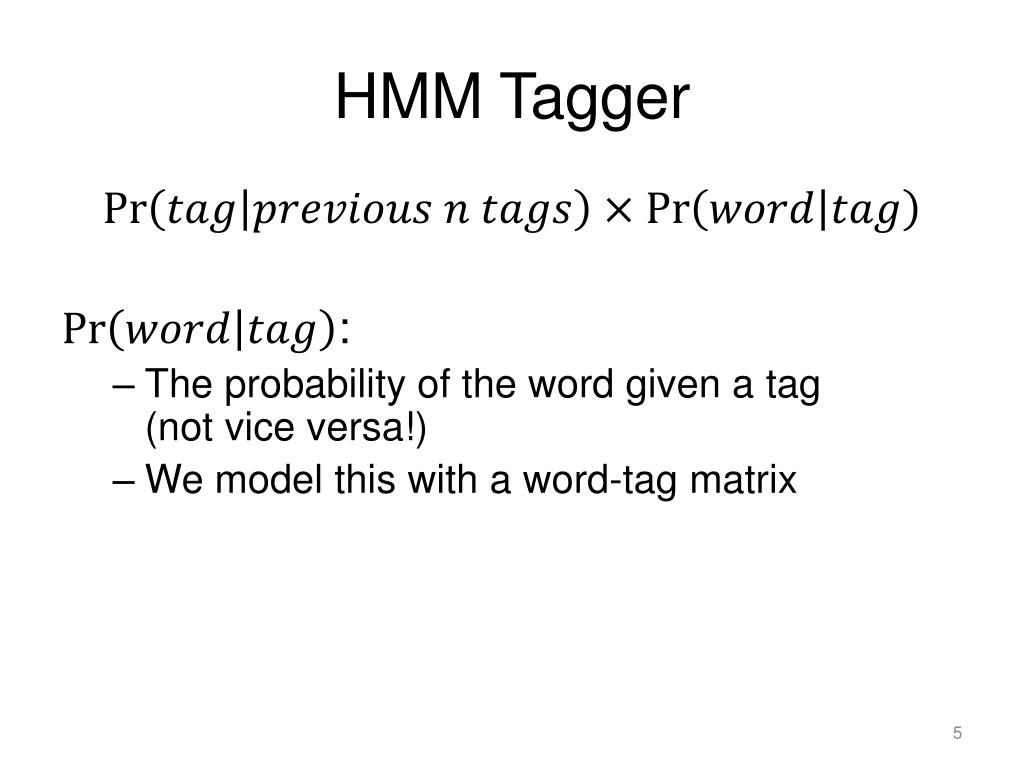

Main.py - extracts the data and runs the program.

Models.py - implementation of the baseline and HMM models.

Pseudowords.py - contains a function that maps words to their pseudowords.

Word_counting.py - contains data structures that store word and tag counts for the text.

An Excel file is saved in the current directory. It contains the error results and a chart that visualizes them. Note that the results might change a little in each run since the program uses the Viterbi algorithm, which ...

Why is POS tagging an important task in NLP?

The part-of-speech tag of a word can expose the context in which the word is used. Therefore POS tagging is often a first step in solving other NLP tasks, such as Semantic Role Labeling and Machine Translation.

Key concepts and terms used in this program

Baseline: a very simple model designed to provide a simple solution for the task. If our next (and more complicated) models do not give better results than this baseline, we should reconsider using them (or check whether we have implementation mistakes). In this program we took as a baseline the MLE (maximum likelihood estimator) model, which simply assigns each word the tag that appears with it most often in the training set.

Hidden Markov Model (HMM): a probabilistic sequence classifier, and also a generative model. In the context of POS tagging, this model makes two assumptions. First, the probability of generating a word depends only on the currently chosen tag. This probability is called the "emission probability". Second, the probability of generating the next tag depends only on the previous n-1 tags (this assumption is called the "Markov assumption"; in this program we chose n=2, i.e. a bigram HMM, in which each tag depends only on the tag before it). This probability is called the "transition probability".

Unknown words: words that do not appear in the training set but do appear in the test set. It is harder to predict their tags, since we rely heavily on the appearance of words in the training set.

Pseudowords: a method for dealing with unknown words. The idea is to convert words from both the training and test sets into specific predefined words. Hopefully, after the conversion, many unknown words will also appear in the training set.

Add-1 smoothing: our models depend on counting appearances of pairs in the training set (because we use a bigram HMM). If some pair from the test set does not appear there, we assign probability 0 to this "unseen event", and hence probability 0 to the whole sequence we are trying to score. Furthermore, pairs that do appear in the training set get a higher probability, so there is a risk of overfitting. Add-1 smoothing is a technique for dealing with these problems by adding 1 to every count. Note that this is not the preferred solution for these problems (see other smoothing methods such as Kneser-Ney smoothing).

Viterbi algorithm: a dynamic programming algorithm for finding the most likely sequence of hidden states (tags, in our case). Its advantage is its run time. If the number of possible tags is K and the length of the word sequence is N, then the inference (prediction) phase, which computes the probability of each tag sequence and returns the most probable one, takes O(K^N) with a brute-force method. The Viterbi algorithm, however, takes O(N * K^m) for an m-gram HMM.

More details on HMMs can be found in the excellent book "Speech and Language Processing" by Dan Jurafsky and James H. Martin.
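As an illustration of the MLE baseline described in this post, here is a minimal sketch that assigns each word the tag it appears with most often in the training set. The function names, the toy data, and the `default_tag` fallback for unseen words are assumptions made for this example; they are not taken from Models.py.

```python
from collections import Counter, defaultdict


def train_baseline(tagged_sentences):
    """Map each word to the tag it appears with most often in training."""
    counts = defaultdict(Counter)
    for sentence in tagged_sentences:
        for word, tag in sentence:
            counts[word][tag] += 1
    return {word: tag_counts.most_common(1)[0][0]
            for word, tag_counts in counts.items()}


def tag_baseline(model, words, default_tag="NN"):
    """Tag each word; unseen words get default_tag (an assumption)."""
    return [model.get(word, default_tag) for word in words]


# Toy training data, invented for this example.
train = [
    [("the", "DT"), ("dog", "NN"), ("barks", "VBZ")],
    [("the", "DT"), ("run", "NN")],
    [("dogs", "NNS"), ("run", "VBP")],
    [("they", "PRP"), ("run", "VBP")],
]
model = train_baseline(train)
print(tag_baseline(model, ["the", "dog", "run", "cat"]))
# prints ['DT', 'NN', 'VBP', 'NN']
```

The word "run" is tagged VBP because it appears twice with VBP and only once with NN, while the unseen word "cat" falls back to the default tag.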
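The pseudowords idea can be sketched roughly as follows. The pseudoword classes, the `min_count` threshold for deciding which training words to keep, and all function names are hypothetical choices for this example; the actual mapping in Pseudowords.py may differ.

```python
import re
from collections import Counter


def to_pseudoword(word):
    """Map a word to a coarse pseudoword class (classes are hypothetical)."""
    if re.fullmatch(r"\d{4}", word):
        return "<fourDigitNum>"
    if re.fullmatch(r"\d[\d.,]*", word):
        return "<number>"
    if word.isupper():
        return "<allCaps>"
    if word[:1].isupper():
        return "<initCap>"
    if word.endswith("ing"):
        return "<ing>"
    return "<other>"


def build_vocab(train_sentences, min_count=2):
    """Keep words seen at least min_count times (threshold is an assumption)."""
    counts = Counter(w for sentence in train_sentences for w in sentence)
    return {w for w, c in counts.items() if c >= min_count}


def apply_pseudowords(sentences, vocab):
    """Replace every out-of-vocabulary word with its pseudoword."""
    return [[w if w in vocab else to_pseudoword(w) for w in sentence]
            for sentence in sentences]


# Toy data, invented for this example.
train = [["The", "dog", "sat"], ["The", "cat", "sat"]]
test = [["NASA", "launched", "in", "1990"]]
vocab = build_vocab(train)
print(apply_pseudowords(train, vocab))
print(apply_pseudowords(test, vocab))
```

Because the same conversion is applied to both sets, a test word such as "launched" becomes `<other>`, a token that also occurs in the converted training data.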
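To make add-1 smoothing concrete, the sketch below estimates bigram transition probabilities as (count(prev, cur) + 1) / (count(prev) + K), where K is the number of tags. The function name and the toy tag sequences are assumptions for this example, and the same idea would apply to emission counts.

```python
from collections import Counter


def add_one_transition(tag_sequences, tags):
    """Return a function prob(prev, cur) with add-1 smoothed estimates."""
    pair_counts = Counter()
    prev_counts = Counter()
    for seq in tag_sequences:
        for prev, cur in zip(seq, seq[1:]):
            pair_counts[(prev, cur)] += 1
            prev_counts[prev] += 1
    num_tags = len(tags)

    def prob(prev, cur):
        # Adding 1 to every pair count keeps unseen pairs above zero;
        # adding num_tags to the denominator keeps each row summing to 1.
        return (pair_counts[(prev, cur)] + 1) / (prev_counts[prev] + num_tags)

    return prob


# Toy data, invented for this example.
tags = ["DT", "NN", "VB"]
prob = add_one_transition([["DT", "NN", "VB"], ["DT", "NN"]], tags)
print(prob("DT", "NN"))  # seen twice: (2 + 1) / (2 + 3)
print(prob("DT", "VB"))  # never seen: (0 + 1) / (2 + 3)
```

The unseen pair (DT, VB) now gets a small non-zero probability instead of zeroing out every sequence that contains it.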
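The Viterbi algorithm for a bigram HMM can be sketched as below. This is a minimal illustration, not the implementation from Models.py: the probability tables are toy values, the parameter names (`start_p`, `trans_p`, `emit_p`) are assumptions, and unseen emissions use a small floor value instead of proper smoothing. It works in log space to avoid underflow and runs in O(N * K^2) time.

```python
import math


def viterbi(words, tags, start_p, trans_p, emit_p, floor=1e-12):
    """Return the most likely tag sequence for words under a bigram HMM.

    start_p[t], trans_p[prev][t] and emit_p[t][word] are probabilities.
    Missing emissions fall back to `floor` (an assumption of this sketch).
    """
    if not words:
        return []

    def log(p):
        return math.log(p) if p > 0 else float("-inf")

    # best[i][t]: best log-probability of a tagging of words[:i+1] ending in t
    best = [{t: log(start_p[t]) + log(emit_p[t].get(words[0], floor))
             for t in tags}]
    back = [{}]  # back[i][t]: previous tag on that best path
    for i in range(1, len(words)):
        best.append({})
        back.append({})
        for t in tags:
            prev, score = max(
                ((p, best[i - 1][p] + log(trans_p[p][t])) for p in tags),
                key=lambda pair: pair[1],
            )
            best[i][t] = score + log(emit_p[t].get(words[i], floor))
            back[i][t] = prev

    # Follow the back-pointers from the best final tag.
    path = [max(tags, key=lambda t: best[-1][t])]
    for i in range(len(words) - 1, 0, -1):
        path.append(back[i][path[-1]])
    return path[::-1]


# Toy model, invented for this example.
tags = ["DT", "NN", "VB"]
start_p = {"DT": 0.8, "NN": 0.1, "VB": 0.1}
trans_p = {
    "DT": {"DT": 0.05, "NN": 0.9, "VB": 0.05},
    "NN": {"DT": 0.1, "NN": 0.3, "VB": 0.6},
    "VB": {"DT": 0.5, "NN": 0.3, "VB": 0.2},
}
emit_p = {
    "DT": {"the": 0.9},
    "NN": {"dog": 0.5, "barks": 0.1},
    "VB": {"barks": 0.6, "dog": 0.05},
}
print(viterbi(["the", "dog", "barks"], tags, start_p, trans_p, emit_p))
# prints ['DT', 'NN', 'VB']
```

Each position only needs the K best scores from the previous position, which is why the work per word is K^2 rather than growing with the number of complete tag sequences.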
